Upload Artifacts to GCS

You can use the Upload Artifacts to GCS step in your CI pipelines to upload artifacts to Google Cloud Storage (GCS). For more information on GCS, go to the Google Cloud documentation on Uploads and downloads.

You need:

- Access to a GCS instance.

- A CI pipeline with a Build stage.

- Steps in your pipeline that generate artifacts to upload, such as by running tests or building code. The steps you use depend on what artifacts you ultimately want to upload.

- A GCP connector.

You can also upload artifacts to S3, JFrog, and Sonatype Nexus.

Add an Upload Artifacts to GCS step

In your pipeline's Build stage, add an Upload Artifacts to GCS step and configure the settings accordingly.

Here is a YAML example of a minimal Upload Artifacts to GCS step.

    - step:
        type: GCSUpload
        name: upload report
        identifier: upload_report
        spec:
          connectorRef: YOUR_GCP_CONNECTOR_ID
          bucket: YOUR_GCS_BUCKET
          sourcePath: path/to/source
          target: path/to/upload/location

Upload Artifacts to GCS step settings

The Upload Artifacts to GCS step has the following settings. Depending on the stage's build infrastructure, some settings might be unavailable or optional. Settings specific to containers, such as Set Container Resources, are not applicable when using a VM or Harness Cloud build infrastructure.

Name

Enter a name summarizing the step's purpose. Harness automatically assigns an Id (Entity Identifier) based on the Name. You can change the Id.

GCP Connector

The Harness connector for the GCP account where you want to upload the artifact. For more information, go to Google Cloud Platform (GCP) connector settings reference. This step supports GCP connectors that use access key authentication. It does not support GCP connectors that inherit delegate credentials.

Bucket

The GCS destination bucket name.

Source Path

Path to the file or directory that you want to upload.

If you want to upload a compressed file, you must use a Run step to compress the artifact before uploading it.
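As a minimal sketch of that approach, the following fragment compresses a reports directory in a Run step and then uploads the resulting archive. The step names, the archive name `reports.tar.gz`, and the `target/surefire-reports` path are illustrative assumptions. The Run step is assumed to execute on a build infrastructure where `tar` is available and no container image is required; on container-based infrastructures, the Run step would also need a connector and image.

```yaml
# Hypothetical example: step names and paths are placeholders.
- step:
    type: Run
    name: compress reports
    identifier: compress_reports
    spec:
      shell: Sh
      # Create a gzip-compressed tarball of the reports directory.
      command: tar -czf reports.tar.gz target/surefire-reports
- step:
    type: GCSUpload
    name: upload archive
    identifier: upload_archive
    spec:
      connectorRef: YOUR_GCP_CONNECTOR_ID
      bucket: YOUR_GCS_BUCKET
      # Upload the archive created by the previous step.
      sourcePath: reports.tar.gz
      target: path/to/upload/location
```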

Target

Provide a path, relative to the Bucket, where you want to store the artifact. Do not include the bucket name; you specified this in Bucket.

If you don't specify a Target, Harness uploads the artifact to the bucket's main directory.
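For illustration, the fragment below stores each build's reports under a per-build path by using the `<+pipeline.sequenceId>` expression in the Target, as the pipeline examples later in this topic do. The `reports/` prefix is an assumption added for this sketch.

```yaml
- step:
    type: GCSUpload
    name: upload report
    identifier: upload_report
    spec:
      connectorRef: YOUR_GCP_CONNECTOR_ID
      bucket: YOUR_GCS_BUCKET
      sourcePath: target/surefire-reports
      # Path relative to the bucket; do not repeat the bucket name here.
      # The "reports/" prefix is a placeholder.
      target: reports/<+pipeline.sequenceId>
```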

Run as User

Specify the user ID to use to run all processes in the pod, if running in containers. For more information, go to Set the security context for a pod.
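As a minimal sketch, assuming the YAML key for this setting is `runAsUser` and that the build runs in containers, the fragment below runs the step's processes as user ID 1000. The ID value is an arbitrary placeholder.

```yaml
- step:
    type: GCSUpload
    name: upload report
    identifier: upload_report
    spec:
      connectorRef: YOUR_GCP_CONNECTOR_ID
      bucket: YOUR_GCS_BUCKET
      sourcePath: path/to/source
      target: path/to/upload/location
      # Assumed key name; 1000 is a placeholder user ID.
      runAsUser: "1000"
```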

Set container resources

Set maximum resource limits for the resources used by the container at runtime:

- Limit Memory: The maximum memory that the container can use. You can express memory as a plain integer or as a fixed-point number using the suffixes G or M. You can also use the power-of-two equivalents Gi and Mi. The default is 500Mi.

- Limit CPU: The maximum number of cores that the container can use. CPU limits are measured in CPU units. Fractional requests are allowed; for example, you can specify one hundred millicpu as 0.1 or 100m. The default is 400m. For more information, go to Resource units in Kubernetes.
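The limits described above might be written in YAML roughly as follows. This is a sketch that assumes the limits are expressed under `resources.limits` in the step's `spec`; the values `1Gi` and `500m` are arbitrary examples, not recommendations.

```yaml
- step:
    type: GCSUpload
    name: upload report
    identifier: upload_report
    spec:
      connectorRef: YOUR_GCP_CONNECTOR_ID
      bucket: YOUR_GCS_BUCKET
      sourcePath: path/to/source
      target: path/to/upload/location
      # Assumed structure for container resource limits.
      resources:
        limits:
          memory: 1Gi # power-of-two suffix; overrides the 500Mi default
          cpu: 500m # half a core; overrides the 400m default
```

These limits only apply to container-based build infrastructures, as noted at the start of this section.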

Timeout

Set the timeout limit for the step. Once the timeout limit is reached, the step fails and pipeline execution continues. To set skip conditions or failure handling for steps, go to the Harness documentation on step skip conditions and step failure strategies.

View artifacts on the Artifacts tab

You can use the Artifact Metadata Publisher plugin to publish artifacts to the Artifacts tab. To do this, add a Plugin step after the Upload Artifacts to GCS step.

In the Visual editor, configure the Plugin step settings as follows:

- Name: Enter a name.

- Container Registry: Select a Docker connector.

- Image: Enter plugins/artifact-metadata-publisher.

- Settings: Add the following two settings as key-value pairs.

  - file_urls: Provide a GCS URL that uses the Bucket, Target, and artifact name specified in the Upload Artifacts to GCS step, such as https://storage.googleapis.com/GCS_BUCKET_NAME/TARGET_PATH/ARTIFACT_NAME_WITH_EXTENSION. If you uploaded multiple artifacts, you can provide a list of URLs.

  - artifact_file: Provide any .txt file name, such as artifact.txt or url.txt. This is a required setting that Harness uses to store the artifact URL and display it on the Artifacts tab. This value is not the name of your uploaded artifact, and it has no relationship to the artifact object itself.

In the YAML editor, add a Plugin step that uses the artifact-metadata-publisher plugin:

    - step:
        type: Plugin
        name: publish artifact metadata
        identifier: publish_artifact_metadata
        spec:
          connectorRef: account.harnessImage
          image: plugins/artifact-metadata-publisher
          settings:
            file_urls: https://storage.googleapis.com/GCS_BUCKET_NAME/TARGET_PATH/ARTIFACT_NAME_WITH_EXTENSION
            artifact_file: artifact.txt

- connectorRef: Use the built-in Docker connector (account.harnessImage) or specify your own Docker connector.

- image: Must be plugins/artifact-metadata-publisher.

- file_urls: Provide a GCS URL that uses the bucket, target, and artifact name specified in the Upload Artifacts to GCS step, such as https://storage.googleapis.com/GCS_BUCKET_NAME/TARGET_PATH/ARTIFACT_NAME_WITH_EXTENSION. If you uploaded multiple artifacts, you can provide a list of URLs.

- artifact_file: Provide any .txt file name, such as artifact.txt or url.txt. This is a required setting that Harness uses to store the artifact URL and display it on the Artifacts tab. This value is not the name of your uploaded artifact, and it has no relationship to the artifact object itself.

Build logs and artifact files

When you run the pipeline, you can observe the step logs on the build details page. If the Upload Artifacts to GCS step succeeds, you can find the artifact on GCS. If you used the Artifact Metadata Publisher plugin, you can find the artifact URL on the Artifacts tab.

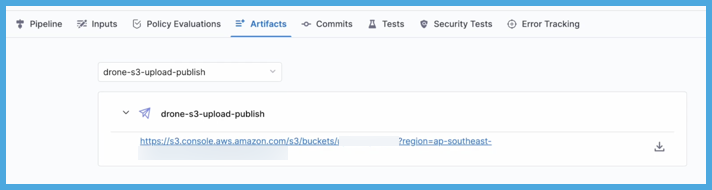

On the Artifacts tab, select the step name to expand the list of artifact links associated with that step.

If your pipeline has multiple steps that upload artifacts, use the dropdown menu on the Artifacts tab to switch between lists of artifacts uploaded by different steps.

Pipeline YAML examples

This example pipeline uses Harness Cloud build infrastructure. It produces test reports, uploads the reports to GCS, and uses the Artifact Metadata Publisher to publish the artifact URL on the Artifacts tab.

    pipeline:
      name: default
      identifier: default
      projectIdentifier: default
      orgIdentifier: default
      tags: {}
      properties:
        ci:
          codebase:
            connectorRef: YOUR_CODEBASE_CONNECTOR_ID
            repoName: YOUR_CODE_REPO_NAME
            build: <+input>
      stages:
        - stage:
            name: test and upload artifact
            identifier: test_and_upload_artifact
            description: ""
            type: CI
            spec:
              cloneCodebase: true
              platform:
                os: Linux
                arch: Amd64
              runtime:
                type: Cloud
                spec: {}
              execution:
                steps:
                  - step: ## Generate test reports.
                      type: RunTests
                      name: runTestsWithIntelligence
                      identifier: runTestsWithIntelligence
                      spec:
                        connectorRef: account.GCR
                        image: maven:3-openjdk-8
                        args: test -Dmaven.test.failure.ignore=true -DfailIfNoTests=false
                        buildTool: Maven
                        language: Java
                        packages: org.apache.dubbo,com.alibaba.dubbo
                        runOnlySelectedTests: true
                        reports:
                          type: JUnit
                          spec:
                            paths:
                              - "target/surefire-reports/*.xml"
                  - step: ## Upload reports to GCS.
                      type: GCSUpload
                      name: upload report
                      identifier: upload_report
                      spec:
                        connectorRef: YOUR_GCP_CONNECTOR_ID
                        bucket: YOUR_GCS_BUCKET
                        sourcePath: target/surefire-reports
                        target: <+pipeline.sequenceId>
                  - step: ## Show artifact URL on the Artifacts tab.
                      type: Plugin
                      name: publish artifact metadata
                      identifier: publish_artifact_metadata
                      spec:
                        connectorRef: account.harnessImage
                        image: plugins/artifact-metadata-publisher
                        settings:
                          file_urls: https://storage.googleapis.com/YOUR_GCS_BUCKET/<+pipeline.sequenceId>/surefire-reports/
                          artifact_file: artifact.txt

This example pipeline uses a Kubernetes cluster build infrastructure. It produces test reports, uploads the reports to GCS, and uses the Artifact Metadata Publisher to publish the artifact URL on the Artifacts tab.

    pipeline:
      name: allure-report-upload
      identifier: allurereportupload
      projectIdentifier: YOUR_HARNESS_PROJECT_ID
      orgIdentifier: default
      tags: {}
      properties:
        ci:
          codebase:
            connectorRef: YOUR_CODEBASE_CONNECTOR_ID
            repoName: YOUR_CODE_REPO_NAME
            build: <+input>
      stages:
        - stage:
            name: build
            identifier: build
            description: ""
            type: CI
            spec:
              cloneCodebase: true
              infrastructure:
                type: KubernetesDirect
                spec:
                  connectorRef: YOUR_KUBERNETES_CLUSTER_CONNECTOR_ID
                  namespace: YOUR_KUBERNETES_NAMESPACE
                  automountServiceAccountToken: true
                  nodeSelector: {}
                  os: Linux
              execution:
                steps:
                  - step: ## Generate test reports.
                      type: RunTests
                      name: runTestsWithIntelligence
                      identifier: runTestsWithIntelligence
                      spec:
                        connectorRef: account.GCR
                        image: maven:3-openjdk-8
                        args: test -Dmaven.test.failure.ignore=true -DfailIfNoTests=false
                        buildTool: Maven
                        language: Java
                        packages: org.apache.dubbo,com.alibaba.dubbo
                        runOnlySelectedTests: true
                        reports:
                          type: JUnit
                          spec:
                            paths:
                              - "target/surefire-reports/*.xml"
                  - step: ## Upload reports to GCS.
                      type: GCSUpload
                      name: upload report
                      identifier: upload_report
                      spec:
                        connectorRef: YOUR_GCP_CONNECTOR_ID
                        bucket: YOUR_GCS_BUCKET
                        sourcePath: target/surefire-reports
                        target: <+pipeline.sequenceId>
                  - step: ## Show artifact URL on the Artifacts tab.
                      type: Plugin
                      name: publish artifact metadata
                      identifier: publish_artifact_metadata
                      spec:
                        connectorRef: account.harnessImage
                        image: plugins/artifact-metadata-publisher
                        settings:
                          file_urls: https://storage.googleapis.com/YOUR_GCS_BUCKET/<+pipeline.sequenceId>/surefire-reports/
                          artifact_file: artifact.txt

Download Artifacts from GCS

You can use the GCS Drone plugin to download artifacts from GCS. This is the same plugin image that Harness CI uses to run the Upload Artifacts to GCS step. To do this, add a Plugin step to your CI pipeline. For example:

    - step:
        type: Plugin
        name: download
        identifier: download
        spec:
          connectorRef: YOUR_DOCKER_CONNECTOR
          image: plugins/gcs
          settings:
            token: <+secrets.getValue("gcpserviceaccounttoken")>
            source: YOUR_BUCKET_NAME/DIRECTORY
            target: path/to/download/destination
            download: "true"

Configure the Plugin step settings as follows:

| Keys | Type | Description | Value example |
|---|---|---|---|
| connectorRef | String | Select a Docker connector. Harness uses this connector to pull the plugin image. | account.harnessImage |
| image | String | Enter plugins/gcs. | plugins/gcs |
| token | String | Reference to a Harness text secret containing a GCP service account token to connect and authenticate to GCS. | <+secrets.getValue("gcpserviceaccounttoken")> |
| source | String | The directory to download from your GCS bucket, specified as BUCKET_NAME/DIRECTORY. | my_cool_bucket/artifacts |
| target | String | Path to the location where you want to store the downloaded artifacts, relative to the build workspace. | artifacts (downloads to /harness/artifacts) |
| download | Boolean | Must be true to enable downloading. If omitted or false, the plugin attempts to upload artifacts instead. | "true" |
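As a sketch of how the downloaded files might be consumed, the fragment below pairs the download step with a Run step that lists the downloaded directory. It uses the example values from the table. The Run step, its name, and the `ls` command are assumptions for illustration, and the Run step is assumed to execute on a build infrastructure where it needs no container image.

```yaml
- step:
    type: Plugin
    name: download
    identifier: download
    spec:
      connectorRef: account.harnessImage
      image: plugins/gcs
      settings:
        token: <+secrets.getValue("gcpserviceaccounttoken")>
        source: my_cool_bucket/artifacts
        # Relative to the build workspace, so files land in /harness/artifacts.
        target: artifacts
        # Without this setting, the plugin attempts an upload instead.
        download: "true"
# Hypothetical follow-up step that inspects the downloaded files.
- step:
    type: Run
    name: list downloads
    identifier: list_downloads
    spec:
      shell: Sh
      command: ls -R /harness/artifacts
```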

Troubleshoot uploading artifacts

Go to the CI Knowledge Base for questions and issues related to uploading artifacts.