Metrics

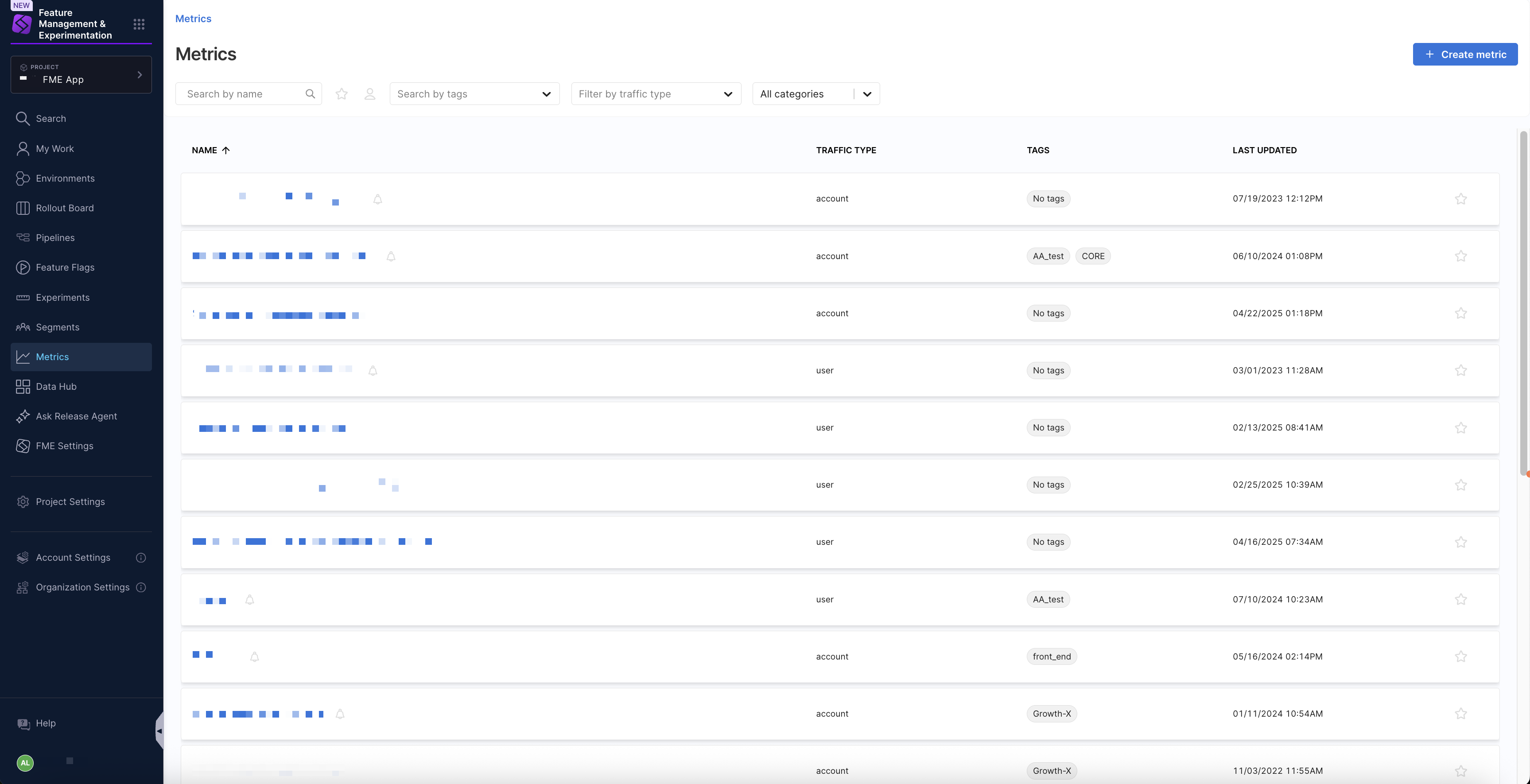

The Metrics page in Harness Feature Management & Experimentation (FME) provides a centralized list view for searching and managing your metrics. A metric measures events that are sent to Harness FME and can count the occurrence of events, measure event values, or measure event properties. Metrics are used to evaluate the impact of feature flags and experiments on user behavior and system performance. You can browse metrics created in Harness FME, scan key details such as traffic type, tags, ownership, and last updated time, and navigate directly to metric definitions, alert policies, and audit logs.

Metric results are calculated per treatment for feature flags that share the same traffic type and use percentage-based targeting rules, allowing you to compare impact between a baseline and comparison treatment. A feature flag is a conditional toggle in Harness FME that enables or disables specific functionality without deploying new code. It allows for controlled feature rollouts, A/B testing, and quick rollbacks if issues arise.

Search and manage metrics

To access metrics created in Harness FME, select Metrics from the FME navigation menu. When viewing the list of metrics, you can use the following filters and indicators to find a specific metric.

- Search: Search for metrics by name.

- Starred by me: Show only metrics you’ve starred.

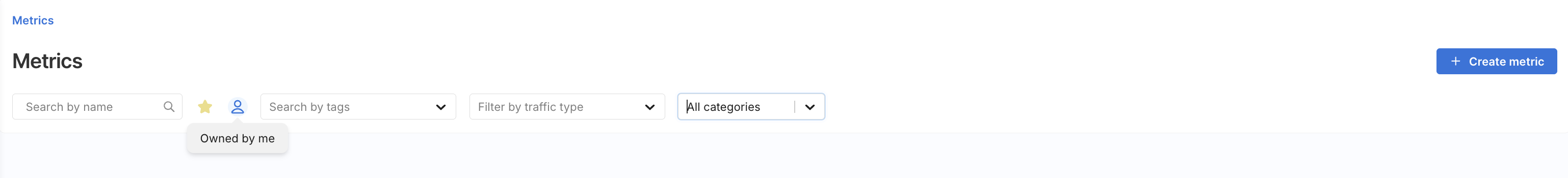

- Owned by me: Show metrics you created or where you are listed as an owner.

- Search by tags: Use the Search by tags dropdown menu to filter metrics by one or more tag values.

- Filter by traffic type: Narrows results by traffic types such as user, account, or anonymous.

- All categories: Use the All categories dropdown to filter by Guardrail metrics.

Each metric appears as a row in the list with column-based details, including the name, traffic type, tags, and the timestamp it was last updated. If a bell icon appears next to a metric name, the metric has an associated alert policy.

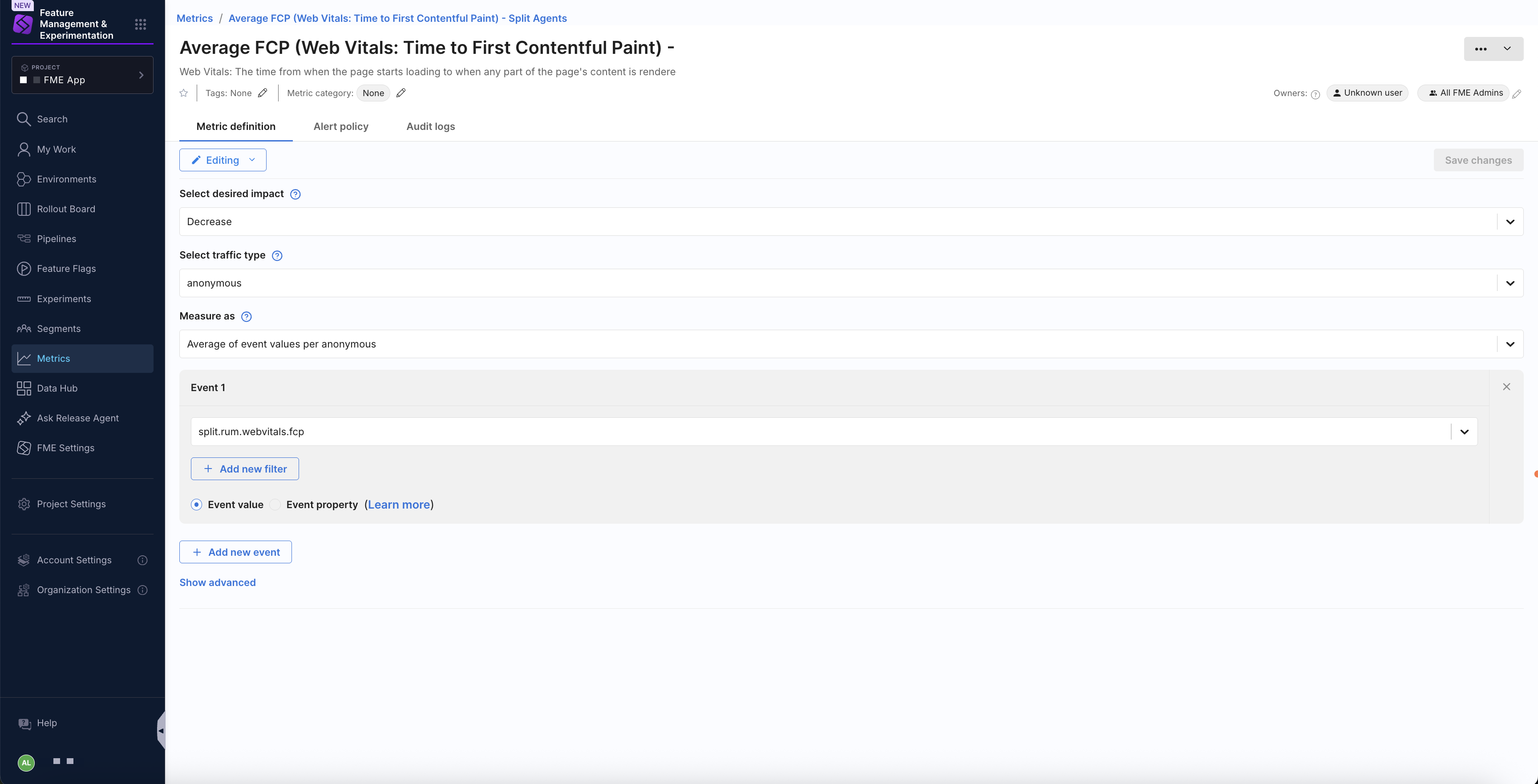

Select a metric to open its details. You can switch between the Metric definition, Alert policies, and Audit logs tabs.

Common metrics

This section outlines common metrics and how to create them in Harness FME so that you can measure impact and run experiments. The guides below cover conversions, page views, and more.

| Metric Guide | Description |
|---|---|
| Conversions | Map, measure, and improve the conversion rate of key user workflows. |
| Errors | Measuring errors alongside feature flags leads to faster issue identification, response, and resolution. |
| Inputs | Track user-entered fields and forms such as radio buttons, checkboxes, sliders, and dropdowns. |
| Interactions | Measure clicks, hover states, scroll depth, and other user interactions. |
| Page Load Performance | Use on-page events such as page load timing and load failures to understand performance. |
| Page Views | Use page view counts and rates in conjunction with other metrics to construct ratios and funnels. |
| Rage Clicks | Identify areas of user frustration by measuring rapidly repeated clicks on an element or area of the screen. |
| Satisfaction | Use feedback response rates, occurrence rates, and scores to understand user happiness. |
| Sessions | Construct engagement metrics such as session start and end, entry and exit rates, and session length. |
| Shopping Cart | Track changes to a shopping cart to measure cart size, value, completion, and abandonment metrics. |

These metrics are designed to help you build impactful products and drive business growth.

Metric types

Harness FME supports the following custom metric types. Metrics are calculated per traffic type key (for example, per user). Each key's contribution is calculated individually and adds a single data point to the distribution of the metric result, so every key carries equal weight in the result.

In the table below, we assume the traffic type selected for the metric is user.

| Function | Description | Example |
|---|---|---|
| Count of events per user | Counts the number of times the event is triggered by your users. Shows the average count. As users revisit your app, they will increase this value, while new users will bring the average down. | The average number of times your users visit a webpage. The average number of support tickets your users create. |
| Sum of event values per user | Adds up the values of the event for your users. Shows the average summed value. As users revisit your app, they will increase this value, while new users will bring the average down. | How much your users spend on average on your website (over the duration of the experiment). The total time your users on average played media on your website. |
| Average of event values per user | Averages the value of the event for your users. Revisiting users will increase the confidence of the result. This calculated result is not expected to change significantly during the experiment (unless experimental factors change). | The average purchase value when your users check out. The average page load time your users experience. |
| Ratio of two events per user | Compares the frequency of two events for your users. Shows the average ratio. Revisiting users will increase the confidence of the result. This calculated result is not expected to change significantly during the experiment (unless experimental factors change). | The average number of hotel searches before a hotel booking. The number of invitations accepted compared with the number of invitations received by your users (on average). The ratio of app sessions with errors compared to app sessions without errors. |
| Percent of unique users | Calculates the percentage of users that triggered the event. As the experiment continues, revisiting users may increase the percentage, while data from new users may increase the confidence of the result. | The percentage of website visitors that completed a purchase. The percentage of users that experienced an error. |
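
To make the per-key calculation concrete, the following Python sketch computes each metric type from a small, made-up event list. It is an illustration only, not Harness FME's implementation: the event records, the `USERS` list, and every function name are hypothetical, and the handling of users who never sent an event (counted as zero for counts and sums, skipped for averages and ratios) is an assumption.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical event records: (user_key, event_type, value).
EVENTS = [
    ("u1", "purchase", 20.0),
    ("u1", "purchase", 40.0),
    ("u2", "purchase", 10.0),
    ("u1", "search", None),
    ("u1", "search", None),
    ("u1", "search", None),
    ("u1", "search", None),
    ("u2", "search", None),
]
# Every user in the sample, including u3, who sent no events (assumed).
USERS = ["u1", "u2", "u3"]


def values_by_user(events, event_type):
    """Group the values of one event type by user key."""
    grouped = defaultdict(list)
    for user, etype, value in events:
        if etype == event_type:
            grouped[user].append(value)
    return grouped


def count_per_user(events, users, event_type):
    """Average event count; users with no events contribute 0."""
    grouped = values_by_user(events, event_type)
    return mean(len(grouped.get(u, [])) for u in users)


def sum_per_user(events, users, event_type):
    """Average of each user's summed event values."""
    grouped = values_by_user(events, event_type)
    return mean(sum(grouped.get(u, []), 0.0) for u in users)


def average_per_user(events, event_type):
    """Average of each user's own average, over users who sent the event."""
    grouped = values_by_user(events, event_type)
    return mean(mean(vals) for vals in grouped.values())


def ratio_per_user(events, numerator, denominator):
    """Average per-user ratio, over users who sent the denominator event."""
    num = values_by_user(events, numerator)
    den = values_by_user(events, denominator)
    return mean(len(num.get(u, [])) / len(vals) for u, vals in den.items())


def percent_unique_users(events, users, event_type):
    """Percentage of users who sent the event at least once."""
    grouped = values_by_user(events, event_type)
    return 100 * sum(1 for u in users if u in grouped) / len(users)
```

With this data, `count_per_user(EVENTS, USERS, "purchase")` averages 2, 1, and 0 purchases across three users. Adding a fourth user with no purchases to `USERS` lowers that average, which mirrors how new users bring the count and sum metrics down. Because each user contributes one data point, u1's two purchases carry the same weight as u2's single purchase in `average_per_user`.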

Metric categories

For more information about metric categories, see Metric categorization.