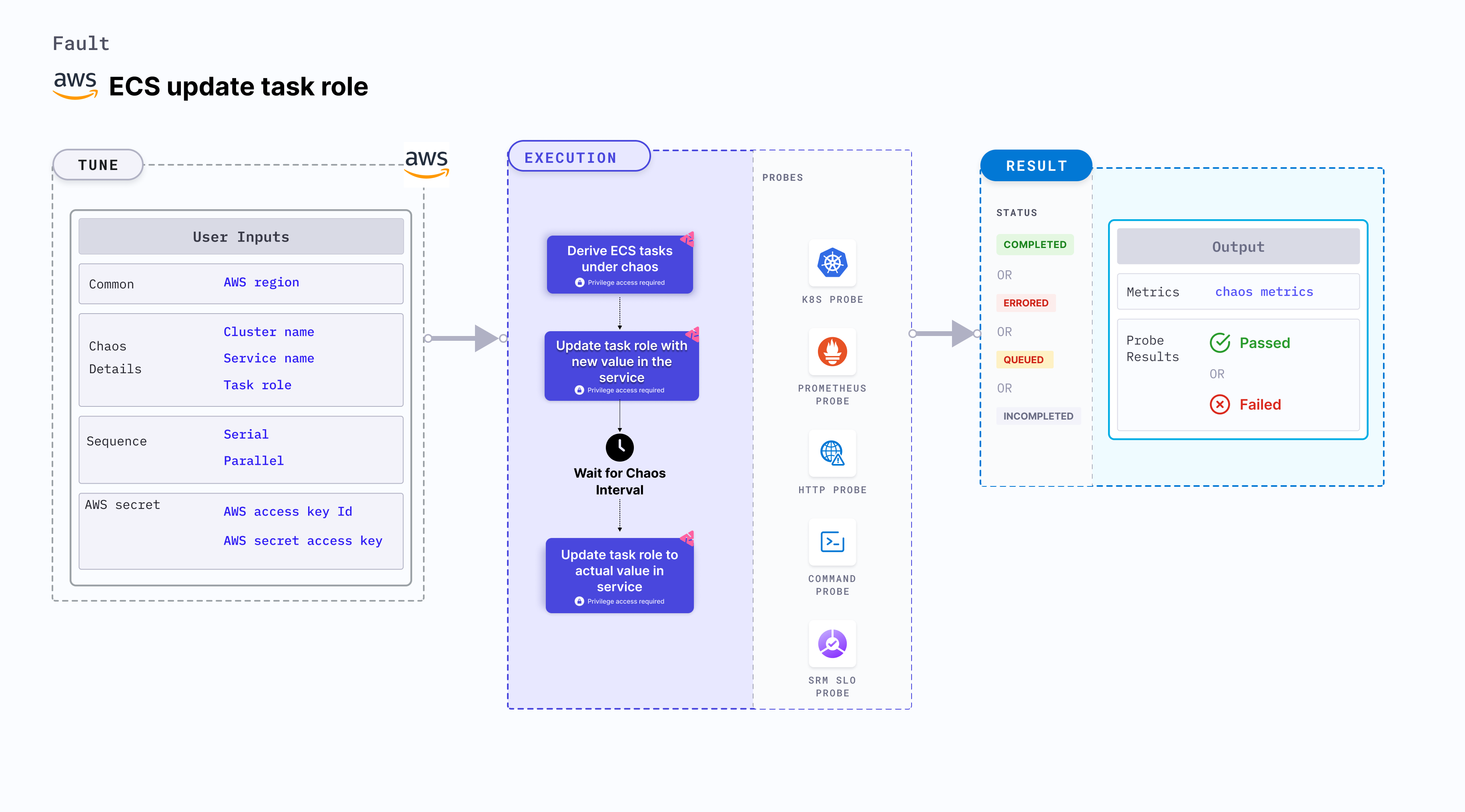

ECS update task role

ECS update task role lets you modify the IAM task role associated with an Amazon ECS (Elastic Container Service) task. This experiment primarily targets ECS Fargate and doesn't depend on EC2 instances. It focuses on altering the state or resources of ECS containers without interacting with the containers directly.

Use cases

ECS update task role:

- Determines the behavior of your ECS tasks when their IAM role is changed.

- Verifies the authorization and access permissions of your ECS tasks under different IAM configurations.

- Modifies the IAM task role associated with a container task by updating the task definition associated with the ECS service or task.

- Simulates scenarios where the IAM role associated with a task is changed, which may impact the authorization and access permissions of the containers running in the task.

- Validates the behavior of your application and infrastructure during simulated IAM role changes, such as:

  - Testing how your application handles changes in IAM role permissions and access.

  - Verifying the authorization settings of your system when the IAM role is updated.

  - Evaluating the impact of changes in IAM roles on the security and compliance of your application.

Modifying the IAM task role with the ECS update task role fault is an intentional disruption. Use it carefully and only in controlled environments.
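The role change described above amounts to registering a new task definition revision with a different task role and pointing the service at it. The following is a minimal Python sketch of that idea, not the fault's actual implementation. The helper name `with_task_role` and the sample ARNs are hypothetical; the read-only field list reflects what `DescribeTaskDefinition` returns but `RegisterTaskDefinition` does not accept. Treating an empty role as "remove the task role" is an assumption based on the documented default of no role.

```python
import copy

# Fields present in a DescribeTaskDefinition result (under "taskDefinition")
# that RegisterTaskDefinition does not accept as input.
READ_ONLY_FIELDS = (
    "taskDefinitionArn", "revision", "status", "requiresAttributes",
    "compatibilities", "registeredAt", "registeredBy", "deregisteredAt",
)

def with_task_role(task_definition, role_arn=""):
    """Return a copy of task_definition that can be re-registered with a new task role.

    Hypothetical helper: an empty role_arn removes the task role entirely.
    """
    new_def = copy.deepcopy(task_definition)
    for field in READ_ONLY_FIELDS:
        new_def.pop(field, None)
    if role_arn:
        new_def["taskRoleArn"] = role_arn
    else:
        new_def.pop("taskRoleArn", None)
    return new_def

# Illustrative input only; the account ID and names are placeholders.
current = {
    "family": "nginx",
    "revision": 3,
    "status": "ACTIVE",
    "taskDefinitionArn": "arn:aws:ecs:us-east-1:111122223333:task-definition/nginx:3",
    "taskRoleArn": "arn:aws:iam::111122223333:role/app-role",
    "containerDefinitions": [{"name": "nginx", "image": "nginx:latest"}],
}
chaos_def = with_task_role(current, "arn:aws:iam::111122223333:role/chaos-role")
```

With boto3, the resulting dictionary could be passed to `register_task_definition(**chaos_def)`, and the new revision's ARN to `update_service(cluster=..., service=..., taskDefinition=...)`. Reverting after the chaos duration would follow the same steps with the original role.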

Prerequisites

- Kubernetes >= 1.17

- An ECS cluster running the desired tasks and containers, and familiarity with ECS service update and deployment concepts.

- Create a Kubernetes secret that has the AWS access configuration (key) in the CHAOS_NAMESPACE. Below is a sample secret file:

apiVersion: v1
kind: Secret
metadata:
  name: cloud-secret
type: Opaque
stringData:
  cloud_config.yml: |-
    # Add the cloud AWS credentials respectively
    [default]
    aws_access_key_id = XXXXXXXXXXXXXXXXXXX
    aws_secret_access_key = XXXXXXXXXXXXXXX

HCE recommends that you use the same secret name, that is, cloud-secret. Otherwise, you will need to update the AWS_SHARED_CREDENTIALS_FILE environment variable in the fault template with the new secret name and you won't be able to use the default health check probes.
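The content of cloud_config.yml follows the INI-style layout of an AWS shared credentials file, so it can be sanity-checked before you create the secret. The sketch below uses Python's standard `configparser`. The function name `read_aws_profile` and the sample key values are hypothetical, and the check only confirms that both keys exist and are non-empty; it does not verify the credentials with AWS.

```python
import configparser

REQUIRED_KEYS = ("aws_access_key_id", "aws_secret_access_key")

def read_aws_profile(text, profile="default"):
    """Parse credentials text and return the keys of one profile as a dict."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    if not parser.has_section(profile):
        raise KeyError(f"profile [{profile}] not found")
    section = parser[profile]
    missing = [key for key in REQUIRED_KEYS if not section.get(key)]
    if missing:
        raise ValueError(f"missing or empty keys: {', '.join(missing)}")
    return {key: section[key] for key in REQUIRED_KEYS}

# Placeholder values for illustration only.
sample = """\
# Add the cloud AWS credentials respectively
[default]
aws_access_key_id = EXAMPLEKEYID
aws_secret_access_key = exampleSecretValue
"""
creds = read_aws_profile(sample)
```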

Below is an example AWS policy to execute the fault.

{
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": [
                "ecs:DescribeTasks",
                "ecs:DescribeServices",
                "ecs:DescribeTaskDefinition",
                "ecs:RegisterTaskDefinition",
                "ecs:UpdateService",
                "ecs:ListTasks",
                "ecs:DeregisterTaskDefinition"
            ],
            "Resource": "*"
        },
        {
            "Effect": "Allow",
            "Action": [
                "iam:PassRole"
            ],
            "Resource": "*"
        }
    ]
}

- Refer to AWS named profile for chaos to use a different profile for AWS faults.

- The ECS containers should be in a healthy state before and after introducing chaos.

- Refer to the common attributes and AWS-specific tunables to tune the tunables shared by all faults and those specific to AWS faults.

- Refer to the superset permission/policy to execute all AWS faults.
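To confirm that a policy you plan to attach covers the actions listed in the example policy above, a rough local check might look like the following Python sketch. The function name `missing_actions` is hypothetical. The check is deliberately simplified: it only looks at `Allow` statements and action names (including `*` wildcards), and it ignores `Deny` statements, resources, and conditions, so it is not a substitute for AWS policy evaluation.

```python
import fnmatch
import json

# Actions taken from the example policy for this fault.
REQUIRED_ACTIONS = (
    "ecs:DescribeTasks", "ecs:DescribeServices", "ecs:DescribeTaskDefinition",
    "ecs:RegisterTaskDefinition", "ecs:UpdateService", "ecs:ListTasks",
    "ecs:DeregisterTaskDefinition", "iam:PassRole",
)

def _as_list(value):
    """IAM allows a single item or a list for Statement and Action."""
    return value if isinstance(value, list) else [value]

def missing_actions(policy_json, required=REQUIRED_ACTIONS):
    """Return the required actions that no Allow statement in the policy names."""
    policy = json.loads(policy_json)
    allowed = []
    for statement in _as_list(policy.get("Statement", [])):
        if statement.get("Effect") != "Allow":
            continue
        allowed.extend(a.lower() for a in _as_list(statement.get("Action", [])))
    return [
        action for action in required
        if not any(fnmatch.fnmatchcase(action.lower(), pattern) for pattern in allowed)
    ]

# Illustrative policy that omits iam:PassRole.
partial = json.dumps({
    "Version": "2012-10-17",
    "Statement": [{"Effect": "Allow", "Action": "ecs:*", "Resource": "*"}],
})
gaps = missing_actions(partial)
```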

Mandatory tunables

| Tunable | Description | Notes |
|---|---|---|
| CLUSTER_NAME | Name of the target ECS cluster. | For example, cluster-1. |
| SERVICE_NAME | Name of the ECS service under chaos. | For example, nginx-svc. |
| REGION | Region name of the target ECS cluster. | For example, us-east-1. |
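Before applying a fault manifest, you can check that its `env` list supplies every mandatory tunable. The following Python sketch assumes the `env` entries have already been loaded as a list of `name`/`value` dictionaries, in the same shape as the ChaosEngine `env` section; the function name `missing_tunables` is hypothetical.

```python
MANDATORY_TUNABLES = ("CLUSTER_NAME", "SERVICE_NAME", "REGION")

def missing_tunables(env):
    """Return mandatory tunables that are absent or have an empty value."""
    provided = {
        entry.get("name")
        for entry in env
        if str(entry.get("value", "")).strip()
    }
    return [name for name in MANDATORY_TUNABLES if name not in provided]

# Values taken from the examples in the table above.
env = [
    {"name": "CLUSTER_NAME", "value": "cluster-1"},
    {"name": "REGION", "value": "us-east-1"},
]
missing = missing_tunables(env)
```

Here `missing` lists `SERVICE_NAME`, because the sample `env` does not set it.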

Optional tunables

| Tunable | Description | Notes |
|---|---|---|
| TOTAL_CHAOS_DURATION | Duration for which chaos is injected into the target resource (in seconds). | Default: 30 s. For more information, go to duration of the chaos. |
| CHAOS_INTERVAL | Interval between successive chaos iterations (in seconds). | Default: 30 s. For more information, go to chaos interval. |
| AWS_SHARED_CREDENTIALS_FILE | Path to the AWS secret credentials. | Default: /tmp/cloud_config.yml. |
| TASK_ROLE | Custom chaos role (IAM role ARN) for the ECS task containers. | Default: no role. For more information, go to task role specification. |
| RAMP_TIME | Period to wait before and after injecting chaos (in seconds). | For example, 30 s. For more information, go to ramp time. |

Task role specification

IAM task role to assign to the ECS task containers during chaos. Tune it by using the TASK_ROLE environment variable.

The following YAML snippet illustrates the use of this environment variable:

# Set task role resource for the target task
apiVersion: litmuschaos.io/v1alpha1
kind: ChaosEngine
metadata:
  name: aws-nginx
spec:
  engineState: "active"
  annotationCheck: "false"
  chaosServiceAccount: litmus-admin
  experiments:
  - name: ecs-update-task-role
    spec:
      components:
        env:
        - name: TASK_ROLE
          value: 'arn:aws:iam::149554801296:role/ecsTaskExecutionRole'
        - name: REGION
          value: 'us-east-2'
        - name: TOTAL_CHAOS_DURATION
          value: '60'